Understanding Shannon entropy in cryptography is not just an academic exercise; it is the absolute foundation of modern digital security. Whether you are architecting a B2B SaaS application, encrypting a massive relational database, or generating a decentralized cryptocurrency wallet, your entire threat model depends on the concept of perfect randomness. If an attacker can guess the numbers used to generate your encryption keys, your system is already compromised before the first encrypted payload is even sent.

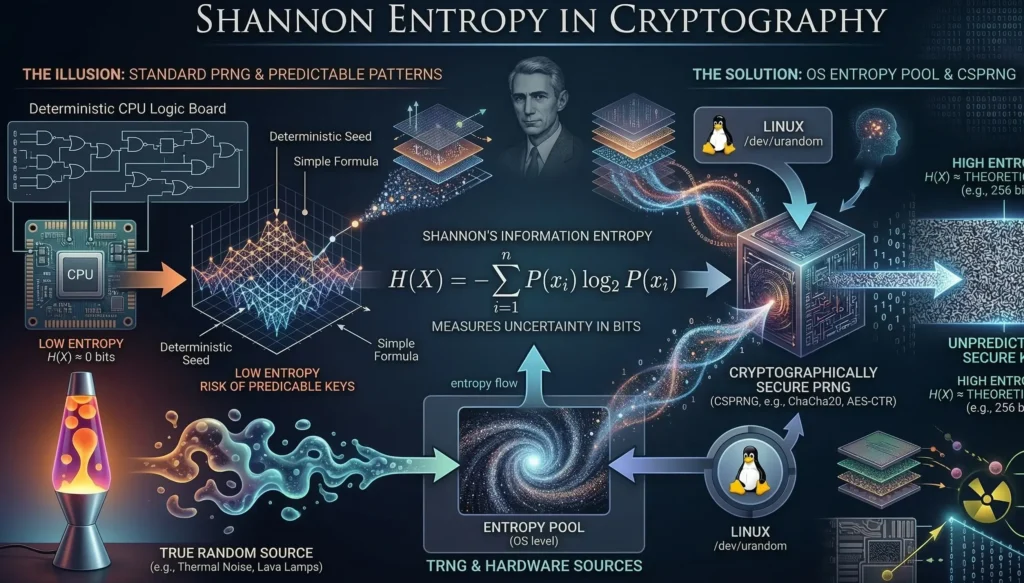

At the absolute core of all modern cybersecurity lies a profound paradox: Computers are strictly deterministic machines. They are engineered to follow precise instructions, executing mathematical operations with flawless predictability. Yet, to secure communications, generate unbreakable cryptographic keys, and protect global financial ledgers, we demand that these highly predictable machines do the exact opposite: produce pure, unpredictable randomness.

When a software engineer fails to understand how a computer attempts to bridge this massive gap between determinism and pure chaos, catastrophic security vulnerabilities emerge. If an advanced persistent threat (APT) actor can predict the random numbers used to generate your SSL/TLS session keys, they do not need to break the complex mathematics of your AES-256 cipher. They do not need massive quantum computers. They simply generate the exact same key you did, and decrypt your data in milliseconds.

In this comprehensive guide, we will dissect the fundamental mathematical foundation of uncertainty. We will explore the critical architectural differences between PRNG vs TRNG (Pseudo vs. True Random Number Generators), examine how a cryptographically secure pseudo-random number generator actually works, and understand why mastering the flow of entropy is the very first step in building impenetrable software systems.

1. The Mathematics of Uncertainty: What is Shannon Entropy?

In 1948, Claude Shannon, the father of information theory, published “A Mathematical Theory of Communication,” a groundbreaking paper that quantified information and uncertainty. In it he introduced the concept of information entropy, whose formula has the same mathematical form as the entropy of statistical thermodynamics.

In cryptography, Shannon entropy measures the unpredictability of a state, or simply put, the amount of “surprise” contained in a piece of data. If each value in a sequence can be predicted from the ones before it (e.g., 1, 2, 3, 4, 5, ...), the next value carries almost no surprise, and its entropy is close to zero. If every bit in a sequence has a perfectly equal 50% chance of being a 0 or a 1, independent of all other bits, the entropy is maximized.

The mathematical formula for calculating the Shannon entropy $H(X)$ of a discrete random variable $X$ is defined as:

$$H(X) = -\sum_{i=1}^{n} P(x_i) \log_2 P(x_i)$$

Where:

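- $P(x_i)$ is the probability of the $i$-th possible outcome occurring, and $n$ is the number of possible outcomes. Outcomes with $P(x_i) = 0$ contribute nothing to the sum.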

- $\log_2$ is the base-2 logarithm, meaning the resulting entropy is measured in bits.
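The formula translates almost line for line into Python. This sketch uses a hypothetical helper, `entropy_from_probabilities`, which takes an explicit probability distribution rather than raw data, and checks it against a fair coin, a biased coin, and a uniformly random byte.

```python
import math


def entropy_from_probabilities(probs: list[float]) -> float:
    """Shannon entropy H(X) in bits for an explicit probability distribution."""
    # Terms with P(x_i) = 0 are skipped, matching the convention 0 * log2(0) = 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)


print(entropy_from_probabilities([0.5, 0.5]))            # fair coin: 1.0
print(round(entropy_from_probabilities([0.9, 0.1]), 3))  # biased coin: 0.469
print(entropy_from_probabilities([1 / 256] * 256))       # uniform byte: 8.0
```

A coin that lands heads 90% of the time still produces one bit of *output* per toss, but less than half a bit of *entropy*, which is exactly the gap an attacker exploits.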

For example, a fair coin toss has exactly 1 bit of entropy. However, an encryption key is not a single coin toss. A standard AES-256 encryption key requires 256 bits of pure, unadulterated entropy. If your key generator has a flaw and only utilizes 40 bits of actual entropy (while padding the rest with predictable zeroes), the attacker no longer faces $2^{256}$ possible keys but only $2^{40}$ (about 1.1 trillion), which a modern GPU cluster can exhaust in seconds to minutes via a brute-force attack.
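The arithmetic behind that claim is easy to check. The guessing rate below (10 billion keys per second) is an assumed, illustrative figure, not a benchmark of any particular GPU; what matters is how the work scales, since every missing bit of entropy halves the attacker's effort.

```python
# Assumed attacker speed, for illustration only (not a hardware benchmark).
GUESSES_PER_SECOND = 10**10
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def worst_case_seconds(effective_bits: int) -> float:
    """Time to try every key in a keyspace of 2**effective_bits candidates."""
    return 2**effective_bits / GUESSES_PER_SECOND


print(f"40-bit key:  {worst_case_seconds(40):.0f} seconds")
print(f"256-bit key: {worst_case_seconds(256) / SECONDS_PER_YEAR:.2e} years")
```

At this assumed rate, the flawed 40-bit key falls in under two minutes on a single device, while the full 256-bit keyspace would take on the order of $10^{59}$ years.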

2. The Deterministic Flaw: The Danger of Predictable Keys

If you ask a standard programming language for a random number, you typically use a function like Math.random() in JavaScript or rand() in C. However, these functions are not designed to be unpredictable, and they are insecure for cryptographic use. They are ordinary, non-cryptographic Pseudo-Random Number Generators (PRNGs).

Many classic PRNGs, such as Java’s java.util.Random, use an algorithm called the Linear Congruential Generator (LCG). An LCG calculates the next “random” number purely from its current internal state (the previous output), using a strict mathematical formula:

$$X_{n+1} = (a \cdot X_n + c) \pmod{m}$$

Because this formula is completely deterministic, it relies on an initial starting value called a seed ($X_0$). Historically, lazy developers would seed these generators using the current system time (e.g., the milliseconds on the computer’s clock). This is a fatal architectural mistake.
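A few lines of Python are enough to implement the formula and see why it is unfit for keys. The class `LCG` is a hypothetical illustration, not a library API, and its default constants are the widely published “Numerical Recipes” parameters, chosen purely as an example.

```python
class LCG:
    """Hypothetical minimal LCG: X_{n+1} = (a * X_n + c) mod m.

    Defaults are the published "Numerical Recipes" constants, used here for
    illustration only. Never use an LCG to generate keys or tokens.
    """

    def __init__(self, seed: int, a: int = 1664525, c: int = 1013904223, m: int = 2**32):
        self.a, self.c, self.m = a, c, m
        self.state = seed % m  # X_0, the seed

    def next_value(self) -> int:
        self.state = (self.a * self.state + self.c) % self.m
        return self.state


# Same seed, same "random" sequence, every time.
gen_a, gen_b = LCG(seed=42), LCG(seed=42)
seq_a = [gen_a.next_value() for _ in range(5)]
seq_b = [gen_b.next_value() for _ in range(5)]
print(seq_a == seq_b)  # True

# Each output is the entire internal state, so one observed value
# is enough to compute every value that follows.
predicted = (1664525 * seq_a[-1] + 1013904223) % 2**32
print(predicted == gen_a.next_value())  # True
```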

If a hacker knows roughly what time a server generated a password reset token or a cryptocurrency wallet, they can brute-force the exact millisecond seed: a window of a few seconds contains only a few thousand candidates. This results in the generation of predictable keys. Once your cryptographic keys become predictable, encryption algorithms like AES or RSA offer absolutely zero protection. An attacker simply recalculates the exact same key you generated and decrypts your database instantly. This is why relying on general-purpose random functions instead of a cryptographically secure source is one of the most common causes of broken cryptographic implementations.
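The attack can be sketched in a few lines. Both functions below are hypothetical: `insecure_reset_token` stands in for vulnerable server code, and it uses Python’s `random` module (a Mersenne Twister, not an LCG) because the flaw is the time-based seed rather than the specific algorithm. The timestamps are made-up values.

```python
import random
import secrets
from typing import Optional


def insecure_reset_token(timestamp_ms: int) -> str:
    """Hypothetical vulnerable server code: seeds a general-purpose PRNG
    (Python's Mersenne Twister) with the current time in milliseconds."""
    rng = random.Random(timestamp_ms)
    return f"{rng.getrandbits(128):032x}"


def recover_seed(token: str, approx_ms: int, window_ms: int = 5_000) -> Optional[int]:
    """Hypothetical attacker code: try every millisecond within
    +/- window_ms of a roughly known issue time."""
    for candidate in range(approx_ms - window_ms, approx_ms + window_ms + 1):
        if insecure_reset_token(candidate) == token:
            return candidate
    return None


# Made-up timestamps: the token was issued at issued_at, and the attacker
# only knows the issue time to within a couple of seconds.
issued_at = 1_700_000_000_123
token = insecure_reset_token(issued_at)
print(recover_seed(token, approx_ms=1_700_000_002_000) == issued_at)  # True

# The fix: draw tokens from the OS CSPRNG, which has no guessable seed.
safe_token = secrets.token_hex(16)
```

The 128-bit token looks strong, but its real entropy is only the roughly 13 bits of uncertainty in the attacker’s 10-second time window.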

3. PRNG vs TRNG: The Architectural Divide

To prevent predictable keys, cryptographers divide random number generators into two distinct categories: Pseudo-Random (PRNG) and True Random (TRNG).

True Random Number Generators (TRNG)

A TRNG does not derive its output from a mathematical formula. Instead, it measures microscopic physical phenomena that are quantum-mechanically or thermodynamically unpredictable. These physical sources feed an entropy pool.

Examples of hardware entropy sources include:

- Thermal Noise: Measuring the random voltage fluctuations (Johnson-Nyquist noise) caused by the thermal agitation of electrons in electronic components such as resistors and transistors.

- Radioactive Decay: Using a Geiger counter to measure the exact nanosecond intervals between decaying atoms.

- Lava Lamps: Famously, Cloudflare uses a wall of physical lava lamps in its San Francisco headquarters. A camera records the chaotic, fluid motion of the wax, and the resulting images serve as an additional source of cryptographic entropy.

Pseudo-Random Number Generators (PRNG)

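A PRNG, by contrast, is a deterministic algorithm that expands a short seed into an arbitrarily long stream of output at high speed, but its output can never be more unpredictable than its seed. TRNGs draw on genuine physical unpredictability, yet they are comparatively slow, and their raw output is often biased and must be conditioned before use. Waiting for physical noise to accumulate 256 bits of entropy takes time, and modern web servers need to negotiate thousands of encrypted HTTPS connections per second. This is where we need a hybrid approach.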

4. The Ultimate Solution: The Cryptographically Secure Pseudo-Random Number Generator

The engineering solution used by every modern operating system to avoid generating predictable keys is the cryptographically secure pseudo-random number generator (CSPRNG).

A CSPRNG is a masterpiece of modern software architecture. It combines the blazing fast speed of a mathematical algorithm with the unpredictability of physical hardware. The operating system continuously collects tiny, chaotic bits of physical data, such as the exact microseconds between your keyboard strokes, the rotational delay of a physical hard drive, or the arrival times of network packets, and mixes all of it into a central, OS-level entropy pool.
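The idea of mixing events into a pool can be illustrated with a hash function. `ToyEntropyPool` is a hypothetical, heavily simplified sketch: each sample is folded into the pool state with SHA-256, so the state depends on every sample ever added. It is not how any real kernel implements its pool, and the timing samples in the usage loop are only a stand-in for real hardware events.

```python
import hashlib
import time


class ToyEntropyPool:
    """Hypothetical, simplified entropy pool for illustration only.

    Real kernels use their own, more elaborate mixing, entropy
    estimation, and reseeding logic.
    """

    def __init__(self) -> None:
        self.state = bytes(32)  # start from 32 zero bytes

    def mix(self, sample: bytes) -> None:
        # New state = SHA-256(old state || sample): order and content both matter.
        self.state = hashlib.sha256(self.state + sample).digest()


pool = ToyEntropyPool()
# Simulated "events": high-resolution timestamps whose low-order bits jitter.
for _ in range(1000):
    pool.mix(time.perf_counter_ns().to_bytes(8, "little"))
```

Because the hash is one-way, observing later pool states does not reveal the individual samples that went in.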

When a cryptographic application (like a web server generating an SSL/TLS session key) requests random data, it does not invent numbers itself. Instead, it reads from a secure system interface, such as Linux’s /dev/urandom and /dev/random device files or the getrandom() system call.
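In Python, applications normally reach the OS CSPRNG through the standard library rather than opening /dev/urandom by hand. A minimal sketch using `os.urandom` and the `secrets` module, both documented as suitable for cryptographic use:

```python
import os
import secrets

# 32 bytes (256 bits) straight from the OS CSPRNG, e.g. for an AES-256 key.
key = os.urandom(32)

# URL-safe text token built from 32 random bytes (for reset links, session IDs).
token = secrets.token_urlsafe(32)

# os.getrandom() wraps the Linux getrandom(2) system call. It is not available
# on every platform, so check for it before use.
if hasattr(os, "getrandom"):
    seed = os.getrandom(32)
```

For application code, `secrets` is usually the clearer choice, since its name makes the security intent explicit to reviewers.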

The CSPRNG takes a chunk of true, unguessable entropy from this entropy pool, uses it as a highly secure seed, and then expands it with a deterministic cryptographic primitive, such as a hash function (SHA-256) or a stream cipher (ChaCha20, which current Linux kernels use for this step). This mechanism allows the system to rapidly generate megabytes of secure, unpredictable data from a small amount of collected entropy, without waiting for new physical events for every byte.
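The “seed once, expand fast” pattern can be sketched with SHA-256 in counter mode. The helper `expand_seed` is hypothetical and deliberately simplified: it is not NIST’s Hash_DRBG and not the Linux kernel’s ChaCha20-based design, and it omits reseeding and protection against state compromise, so it should not be used in production.

```python
import hashlib
import os


def expand_seed(seed: bytes, n_bytes: int) -> bytes:
    """Toy generator: output blocks are SHA-256(seed || counter).

    Illustrative only: no reseeding, no backtracking resistance.
    """
    out = bytearray()
    counter = 0
    while len(out) < n_bytes:
        # Each 32-byte block hashes the secret seed with a fresh 8-byte counter.
        out += hashlib.sha256(seed + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:n_bytes])


seed = os.urandom(32)                  # 256 bits of seed material from the OS
stream = expand_seed(seed, 1_000_000)  # one megabyte of output from that seed
```

The output is only as unpredictable as `seed`: anyone who learns those 32 bytes can regenerate the entire megabyte.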

Understanding this delicate balance is critical for any backend developer. If you are building a blockchain node or an application dealing with sensitive data, I highly recommend reviewing our previous architectural breakdown on how Elliptic Curve Cryptography mathematically processes these random seeds to generate your private keys.

When evaluating system architecture, the debate between PRNG vs TRNG often leads to a practical compromise. Hardware generators are too slow for heavy web traffic, while standard math functions create fatal vulnerabilities. This is exactly why the cryptographically secure pseudo-random number generator has become the absolute industry standard. It prevents the disaster of generating predictable keys by seeding a fast, deterministic algorithm with unpredictable physical data and reseeding it periodically.

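However, this mechanism is only secure once the system’s entropy pool has been properly seeded. The real danger is the window right after boot, especially on embedded devices and freshly cloned virtual machines, when the kernel may not yet have gathered enough unpredictable input; keys generated during that window can be weak or even identical across machines. Once a CSPRNG has been seeded with enough entropy (roughly 256 bits), it does not “use up” that entropy as it produces output, so a high-traffic server reading heavily from /dev/urandom does not gradually degrade into predictable output. This is why the Linux getrandom() system call blocks until the generator has been initialized, and why, since Linux 5.6, /dev/random blocks only until that initial seeding is complete rather than whenever an entropy estimate runs low.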
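On Linux, an application can check whether the kernel’s generator has finished its initial seeding. The hypothetical helper `seed_if_ready` calls `os.getrandom` (Python’s wrapper around the getrandom(2) system call) with the `GRND_NONBLOCK` flag, which makes the call raise `BlockingIOError` instead of waiting when the generator is not yet initialized. On platforms without `os.getrandom`, the sketch simply falls back to `os.urandom`.

```python
import os
from typing import Optional


def seed_if_ready(n_bytes: int = 32) -> Optional[bytes]:
    """Hypothetical helper: return seed bytes only if the kernel CSPRNG is seeded.

    Returns None when getrandom(2) reports that the generator is not yet
    initialized (e.g. very early in boot), so the caller can wait and retry.
    """
    if not hasattr(os, "getrandom"):
        # Platforms without os.getrandom: use os.urandom instead.
        return os.urandom(n_bytes)
    try:
        return os.getrandom(n_bytes, os.GRND_NONBLOCK)
    except BlockingIOError:
        return None
```

On a machine that has been running for a while this returns 32 bytes; returning `None` lets a boot-time service postpone key generation instead of proceeding with a weak seed.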

5. Practice: Measuring Entropy in Python

To truly grasp Shannon entropy in cryptography, we must see it in code. Below is a Python script that estimates the entropy of any given byte sequence from the frequency of each byte value. Measured over a large sample, the output of a good random number generator should score close to 8 bits per byte.

```python
import math
import os
from collections import Counter


def calculate_shannon_entropy(data: bytes) -> float:
    """
    Calculates the Shannon entropy of a byte sequence, in bits per byte.

    Maximum theoretical entropy for 1 byte (256 possible values) is 8.0 bits.
    """
    if not data:
        return 0.0

    entropy = 0.0
    length = len(data)

    # Count the frequency of each byte value (0-255)
    byte_counts = Counter(data)

    for count in byte_counts.values():
        # Calculate probability P(x)
        probability = count / length
        # Apply Shannon's formula: -P(x) * log2(P(x))
        entropy -= probability * math.log2(probability)

    return entropy


# Test 1: Highly predictable data (low entropy)
predictable_data = b"AAAAAAAAAAAAAAA"
print(f"Predictable Data Entropy: {calculate_shannon_entropy(predictable_data):.2f} bits per byte")
# Output: 0.00 bits per byte

# Test 2: Cryptographically secure random data (high entropy)
secure_data = os.urandom(10000)  # Reading from the OS CSPRNG
print(f"Secure Data Entropy: {calculate_shannon_entropy(secure_data):.2f} bits per byte")
# Output: about 7.98 bits per byte (varies slightly between runs)
```

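Treat this measurement as a smoke test, not a proof. If you run it on a large sample of output from your key or token generator (the 10,000 bytes used above, for example) and the result falls noticeably below 7.9 bits per byte, the generator is biased and leaking predictability. The converse does not hold: the estimate only looks at how often each byte value appears, so a perfectly predictable pattern can still score high, and a single short key or token cannot score near 8 at all, because a 32-byte input contains at most 32 distinct values.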
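Both limitations are easy to demonstrate. This sketch uses a compact, hypothetical `byte_entropy` helper that computes the same frequency-based estimate as `calculate_shannon_entropy` above.

```python
import math
from collections import Counter


def byte_entropy(data: bytes) -> float:
    """Per-byte Shannon entropy estimate from observed byte frequencies."""
    n = len(data)
    return sum(-(c / n) * math.log2(c / n) for c in Counter(data).values())


# A completely predictable counting pattern still scores a perfect 8.0,
# because every byte value appears equally often.
counter_pattern = bytes(range(256)) * 40
print(byte_entropy(counter_pattern))  # 8.0

# A 32-byte sample holds at most 32 distinct values, capping the
# estimate at log2(32) = 5 bits per byte, however random it is.
print(byte_entropy(bytes(range(32))))  # 5.0
```

Real randomness testing therefore relies on much larger samples and dedicated statistical test suites, and above all on using a vetted CSPRNG in the first place.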

6. Conclusion

Cryptography is not just about complex algorithms; it is about the structural integrity of your inputs. A flaw in your entropy pool instantly invalidates the most advanced mathematics on the planet. By understanding the critical distinction between PRNG vs TRNG, and strictly enforcing the use of CSPRNGs like /dev/urandom or modern cryptographic APIs, developers can ensure that their encryption keys remain locked behind the impenetrable wall of true mathematical uncertainty.

In our next module, we will explore what happens when random values inevitably collide. We will dive into the mathematics of hash functions and why the infamous Birthday Paradox largely determines how long modern cryptographic hashes need to be.
