For over a thousand years, military commanders believed their communications were mathematically secure. By replacing letters with random symbols, they created encryption systems with an astronomically large number of potential keys. As we established in our previous module on the substitution vs transposition cipher, simply scrambling the alphabet seemed like an unbreakable defense.

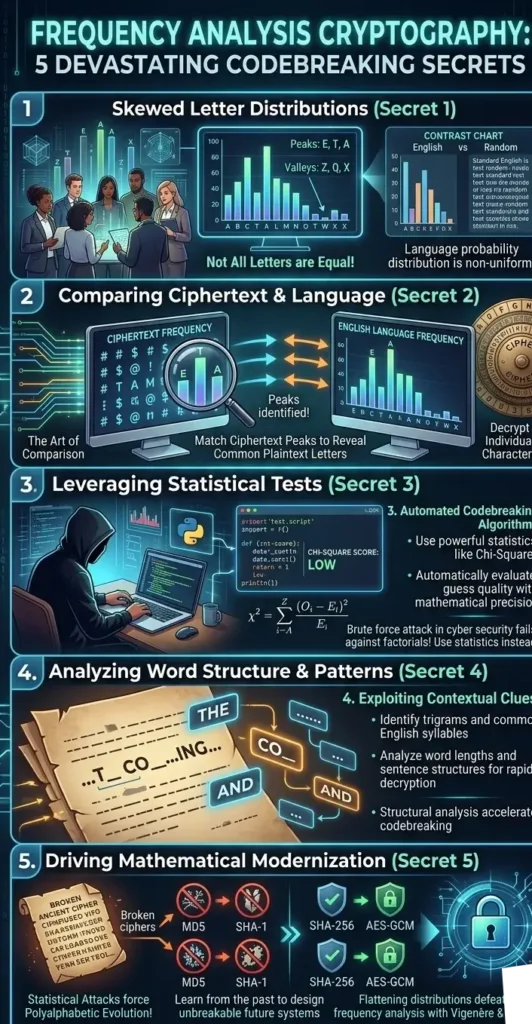

However, in the 9th century, an Arab polymath named Al-Kindi realized something profound: human language is not random. It is governed by strict mathematical probability. This realization gave birth to frequency analysis cryptography, a devastating technique that shattered the millennium-long monopoly of substitution ciphers.

In this advanced guide, we will explore the pure mathematics of codebreaking. We will understand why a modern brute force attack in cyber security fails against a 26-letter alphabet scramble, how to apply Zipf’s law in codebreaking, and how to automate monoalphabetic substitution cryptanalysis using Python and chi-square test cryptography.

1. The Illusion of Security: Why Brute Force Fails

When dealing with historical encryption, a common misconception among beginners is that a standard brute force attack in cyber security is the ultimate weapon. A brute force attack relies on raw computational power to guess every single possible key until the message is revealed. While this works perfectly against a simple Caesar cipher (which only has 25 possible keys), it completely fails against a randomized alphabet.

If a cipher maps every letter to any other random letter, the total number of possible keys is calculated using factorials ($26!$). This creates over 403 septillion possible combinations. Even if you combined all the computing power on Earth, a brute force attack in cyber security would take billions of years to guess the right key. This mathematical wall is exactly why monoalphabetic substitution cryptanalysis was developed. Instead of attacking the key, cryptanalysts attack the predictable linguistic patterns of the plaintext itself.

Beyond analyzing the frequency of single letters, experts analyze entire word structures. By aggressively applying Zipf’s law in codebreaking, analysts know that in any large sample of English text, the most frequent word (usually “the”) will appear twice as often as the second most frequent word. This predictable decay allows attackers to identify linguistic clusters and map out whole sentence structures without needing to guess the cryptographic key.

To fully automate this codebreaking process at scale, modern software relies on variance metrics, specifically chi-square test cryptography. Instead of human eyes guessing words, a Python script can use the chi-square formula to mathematically measure the distance between the letter frequencies of a decrypted guess and the standard English alphabet. The moment the chi-square test cryptography score drops to zero, the computer knows it has successfully shattered the cipher, proving that statistics will always defeat brute force.

2. The Core Concept of Frequency Analysis Cryptography

The foundation of monoalphabetic substitution cryptanalysis relies on a simple truth: if a cipher consistently replaces the letter ‘E’ with the symbol ‘#’, then ‘#’ will become the most frequent symbol in the ciphertext. By counting the symbols and mapping them to standard language statistics, the encryption melts away.

In the English language, letter distribution is highly skewed. According to historical linguistic data, the letter ‘E’ appears roughly 12.7% of the time. The letter ‘T’ appears 9.1%, and ‘A’ appears 8.2%. Conversely, letters like ‘Z’, ‘Q’, and ‘X’ rarely appear, hovering below 0.2%.

To perform manual frequency analysis cryptography, an attacker simply counts the letters in the intercepted message. If the most common letter is ‘X’, the attacker confidently assumes that ‘X’ equals ‘E’. They then look for the most common three-letter combinations (trigrams) like “THE” or “AND”, slowly unraveling the entire text.

3. Zipf’s Law in Codebreaking

While letter frequency is powerful, understanding the behavior of whole words accelerates the decryption process. This brings us to a fascinating empirical rule known as Zipf’s Law, named after linguist George Kingsley Zipf.

Zipf’s Law states that given a large sample of natural language, the frequency of any word is inversely proportional to its rank in the frequency table. The most frequent word (in English, “the”) will occur approximately twice as often as the second most frequent word (“of”), three times as often as the third (“and”), and so on.

$$Frequency \propto \frac{1}{Rank}$$

Applying Zipf’s law in codebreaking allows cryptanalysts to identify word boundaries (if spaces are preserved) or identify common linguistic clusters. By aligning the ciphertext’s word frequency decay curve with the standard Zipfian curve of the English language, the cryptanalyst can confirm they are on the right track during complex monoalphabetic substitution cryptanalysis.

4. Deep Dive: The Mathematics of Chi-Square Test Cryptography

Manual counting is tedious, and when dealing with thousands of intercepted messages, modern cryptanalysis requires rapid automation. But how does a computer program “know” if a decrypted text looks like real English or just random gibberish? It relies on statistical variance, specifically utilizing chi-square test cryptography.

According to the foundational principles of statistics—which you can explore in detail through Wolfram MathWorld’s theorem archive—the Chi-Square ($\chi^2$) test measures the “distance” or “goodness of fit” between an observed distribution and an expected theoretical distribution. In chi-square test cryptography, we compare the observed letter frequencies in our decrypted guess ($O_i$) against the mathematically expected letter frequencies of standard English ($E_i$).

The Core Formula

The fundamental equation used by codebreakers is defined as:

$$\chi^2 = \sum_{i=A}^{Z} \frac{(O_i – E_i)^2}{E_i}$$

Where:

- $O_i$ = The observed count of a specific letter in the decrypted text.

- $E_i$ = The expected count of that letter (calculated as total letters $\times$ standard English probability, e.g., $12.7\%$ for ‘E’).

Hypothesis Testing and Degrees of Freedom

To truly understand chi-square test cryptography, we must frame codebreaking as a formal statistical hypothesis test.

- Null Hypothesis ($H_0$): The decrypted text perfectly follows the standard English letter distribution. (Meaning: We have found the correct key).

- Alternative Hypothesis ($H_1$): The text does not follow English distribution. (Meaning: The text is still encrypted gibberish).

To evaluate this, the computer calculates the “Degrees of Freedom” ($df$). In the English alphabet, there are 26 letters. Therefore, the degrees of freedom are $k – 1$:

$$df = 26 – 1 = 25$$

The Critical Value Threshold

By consulting standard statistical tables, cryptanalysts use a significance level (commonly $\alpha = 0.05$). For $df = 25$ at $\alpha = 0.05$, the critical $\chi^2$ value is approximately $37.65$.

This creates a definitive, programmable decision rule for our automated brute force attack in cyber security algorithms:

- If the calculated $\chi^2 > 37.65$, the variance is too high. We reject the Null Hypothesis. The decryption attempt is incorrect.

- If the calculated $\chi^2 < 37.65$, we fail to reject the Null Hypothesis. The closer the $\chi^2$ value is to $0$, the higher the mathematical certainty that we have successfully cracked the cipher.

This mathematical scoring system eliminates human error and is the precise engine that drives automated frequency analysis cryptography today.

5. Python Implementation: Automating the Cryptanalyst

Let’s write a Python script that utilizes chi-square test cryptography to automatically break a Caesar substitution cipher. Instead of human eyes reading the output, the computer will calculate the $\chi^2$ score for all 26 possible shifts and automatically select the one with the lowest mathematical variance.

import string

# Standard English letter frequencies (expected probabilities)

ENGLISH_FREQ = {

'A': 0.082, 'B': 0.015, 'C': 0.028, 'D': 0.043, 'E': 0.130, 'F': 0.022,

'G': 0.020, 'H': 0.061, 'I': 0.070, 'J': 0.0015, 'K': 0.0077, 'L': 0.040,

'M': 0.024, 'N': 0.067, 'O': 0.075, 'P': 0.019, 'Q': 0.00095, 'R': 0.060,

'S': 0.063, 'T': 0.091, 'U': 0.028, 'V': 0.0098, 'W': 0.024, 'X': 0.0015,

'Y': 0.020, 'Z': 0.00074

}

def calculate_chi_square(text):

"""Calculates the Chi-Square score of a given text against standard English."""

text = text.upper()

letter_counts = {char: text.count(char) for char in string.ascii_uppercase}

total_letters = sum(letter_counts.values())

if total_letters == 0: return float('inf')

chi_square_score = 0

for char in string.ascii_uppercase:

observed = letter_counts[char]

expected = ENGLISH_FREQ[char] * total_letters

if expected > 0:

# Applying the LaTeX formula: (O - E)^2 / E

chi_square_score += ((observed - expected) ** 2) / expected

return chi_square_score

def auto_crack_cipher(ciphertext):

"""Automatically finds the best decryption using chi-square test cryptography."""

best_score = float('inf')

best_text = ""

best_key = 0

for key in range(26):

decrypted = ""

for char in ciphertext:

if char.isupper():

decrypted += chr((ord(char) - 65 - key) % 26 + 65)

elif char.islower():

decrypted += chr((ord(char) - 97 - key) % 26 + 97)

else:

decrypted += char

# Score this specific decryption attempt

score = calculate_chi_square(decrypted)

if score < best_score:

best_score = score

best_text = decrypted

best_key = key

print(f"Cracked! Best Key: {best_key}")

print(f"Chi-Square Score: {best_score:.2f}")

print(f"Plaintext: {best_text}")

# Example Usage

encrypted_message = "Kz xzccjveky tveklip, wzivr vetipgkzfe nrj yrtbvu sp drkyvdzkztj."

auto_crack_cipher(encrypted_message)

When you run this script, the computer doesn’t just guess; it mathematically proves that the decrypted text is English. This script is the foundational blueprint for how modern NSA tools evaluate intercepted data streams.

6. Conclusion: The Polyalphabetic Response

The discovery of frequency analysis cryptography was a cataclysmic event in the history of cybersecurity. It proved that simply hiding letters behind secret symbols was mathematically useless because the structural statistics of human language always bleed through.

To survive, cryptographers had to invent a new system. They needed an algorithm that could artificially flatten the probability distribution, ensuring that ‘E’ was not always replaced by the same letter. This necessity led to the creation of the Vigenère cipher and the era of polyalphabetic encryption, which we will mathematically dissect in our next module.

Bądź na bieżąco!

Zapisz się, aby nie przegapić nowości na Review Space.

Join Our Newsletter

No spam. Unsubscribe anytime.